Latest Thoughts

-

📻 Pilot: What is a Photo?

Host Jason Michael Perry sits down with Joel Benge, a communications strategist and author, to ask what a photo even means in an age where AI can rewrite reality. From fake principal voicemails to AI-generated films, Perry and Benge explore how synthetic media is reshaping trust and what that means for security, family, and everyday […]

-

🧠 Why Workslop Misses the Point on AI at Work

AI-generated workslop might be an issue, but the real performance gains are being drastically understated.

AI is the great equalizer. It takes F or D level work and pushes it up to a C or even B. For recruiters and managers, that changes the signals they used to rely on. Spelling mistakes, awkward phrasing, or obvious gaps in formatting once made it easy to weed out weak candidates. AI erases those clues. Just like phishing training that teaches us to look for typos and clunky wording, the cues we’ve built BS detectors around no longer apply. Slop is moving further up the pipeline than it once did.

But the productivity gains from AI are still understated. As Ethan Mollick has pointed out, there is a growing stigma around admitting how much experts use AI. Spot an unusual phrasing or a certain punctuation mark and some people instantly dismiss the work as machine-made. That pushes AI use underground. People draft in personal tools or resort to shadow IT so they can get the benefit without the stigma. The final product looks like a polished draft, but few admit how much of it came from working alongside AI.

The reality is more people are using AI than want to own it. These tools do not replace critical thinking or fill in gaps of real experience. They are exponentially more valuable in the hands of someone who knows their domain than someone who does not. Training people to use AI to expand their value, not as a magical crutch, is the difference between slop and real output.

-

🧠 H-1B, Remote Work, and the RTO Paradox

Reading the news about a $100K fee on H-1B visas, I kept seeing the same question pop up: why hire someone on an H-1B at all instead of just building an offshore team?

Early in my career, the answer was obvious: H-1B hires let you expand the expertise of your local team and grow culture right where you sit. Outsourcing chips away at that. Building a team in another country means learning a new market, a new culture, and a whole new operating model.

For decades, offices enforced geographic restrictions. If you wanted to compete for the best jobs, you moved to the meccas like San Francisco or New York City. For some roles, that may never change. But when we push back on RTO, we also remove those restrictions. Suddenly, the best person might live anywhere, as long as they can work golden hours or travel when needed.

But here’s the twist: remote work changed everything.

I run my own business now, and while it is nice when people are local, it does not stop me from working with team members in different states or countries. I am usually looking for the best person I can afford for the role. Local is lagniappe (a little something extra), not the requirement.

That is where RTO gets interesting. For some companies and roles, being in-person may feel safer, or may even reduce the competition for jobs. For others, it might limit access to talent in ways that hurt more than it helps.

So maybe the real question is not whether RTO is good or bad, but whether the geographic restrictions it enforces are worth the tradeoff.

-

Savings Unlock Calculator

The Savings Unlock Calculator looks at AI through a different lens: time, efficiency, and “salary not spent.” It shows how much capacity your team can unlock without adding headcount by freeing up FTEs, saving hours, and raising efficiency. The point isn’t just cost-cutting, it’s about finding new room to grow with the team you already have. Try it out!
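
To make "salary not spent" concrete, here is a minimal sketch of the kind of arithmetic a calculator like this might run. The formula, the function name `savings_unlock`, and the 2,080-hour work year are assumptions for illustration, not the calculator's actual logic.

```python
from dataclasses import dataclass

HOURS_PER_FTE_YEAR = 2080  # assumption: 40 hours x 52 weeks


@dataclass
class SavingsEstimate:
    hours_saved_per_year: float
    fte_freed: float
    salary_not_spent: float


def savings_unlock(team_size: int, hours_saved_per_person_per_week: float,
                   avg_salary: float, weeks_per_year: int = 52) -> SavingsEstimate:
    """Hypothetical estimate of capacity unlocked without adding headcount."""
    hours = team_size * hours_saved_per_person_per_week * weeks_per_year
    fte = hours / HOURS_PER_FTE_YEAR  # hours expressed as full-time equivalents
    return SavingsEstimate(hours, fte, fte * avg_salary)


# Example inputs are made up: 20 people each saving 4 hours a week.
estimate = savings_unlock(team_size=20, hours_saved_per_person_per_week=4,
                          avg_salary=90_000)
print(estimate)
```

With these made-up inputs, the team frees up the equivalent of two full-time roles, which is capacity that can go to new work rather than new hires.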

-

Growth Unlock Calculator

I built this Growth Unlock Calculator to test how AI-driven productivity gains could flow directly into top-line revenue. By plugging in team size, average revenue per employee, and adoption rates, you can see how different impact levels translate into potential growth. Try it out!
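
As a rough sketch of how those inputs could combine, the snippet below multiplies baseline revenue by the adoption rate and an assumed productivity gain. The formula, the function name `growth_unlock`, and the impact-level percentages are illustrative assumptions, not the calculator's real model.

```python
def growth_unlock(team_size: int, revenue_per_employee: float,
                  adoption_rate: float, productivity_gain: float) -> float:
    """Hypothetical top-line uplift from AI-driven productivity gains."""
    baseline_revenue = team_size * revenue_per_employee
    # Only the share of the team that adopts AI contributes to the uplift.
    return baseline_revenue * adoption_rate * productivity_gain


# Assumed impact levels; real values depend on the team and the work.
IMPACT_LEVELS = {"low": 0.05, "medium": 0.10, "high": 0.20}

scenarios = {
    level: growth_unlock(team_size=50, revenue_per_employee=200_000,
                         adoption_rate=0.5, productivity_gain=gain)
    for level, gain in IMPACT_LEVELS.items()
}

for level, uplift in scenarios.items():
    print(f"{level}: ${uplift:,.0f}")
```

Comparing the scenarios side by side shows how sensitive the result is to adoption: halving the adoption rate halves the uplift at every impact level.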

-

🧠 AI Is Making Questionable Food Look Delicious

Some of the best AI use cases aren’t flashy, they’re just quietly helpful.

If you’ve ever ordered from DoorDash or Uber Eats, you’ve probably seen some truly questionable food photos. Now, Uber’s using AI to re-plate dishes, enhance low-quality images, and summarize reviews into clear, useful descriptions.

-

🧠 AI Pricing Isn’t the Problem

AI use cases aren’t always about novelty. Sometimes the power is simple: process more information, make better decisions, and act immediately. That’s exactly what sparked controversy last week when Delta announced plans to use AI to personalize airfare pricing. After public pushback, Delta clarified that it was using a partner to dynamically adjust prices based on demand and competitors, something airlines have done for decades.

What’s changed is the speed.

Before AI, we saw the same pattern in retail stores like Best Buy and Walmart rolling out e-ink price labels to make price changes cheaper, faster, and less error-prone. What used to take days now takes minutes. These systems weren’t about AI. They were about enabling action at scale.

Today, companies are building AI-powered pricing systems that go even further, integrating with ERP and supplier data to adjust prices in real time. Working with groups like PerryLabs, they’re pushing updates across hundreds of products or stores multiple times a day. When margins shift due to something like a tariff change or supplier shortage, the system responds. Fast. Strategically. Without waiting for a human in the loop.
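
A minimal sketch of that kind of rule, assuming a simple target-margin policy: when a tariff raises unit cost, every price is recalculated to hold the same margin. The function name `reprice`, the catalog, and the 10% tariff are hypothetical; a production system would pull costs from ERP and supplier feeds and weigh demand and competitors too.

```python
def reprice(unit_cost: float, target_margin: float) -> float:
    """Return the price that keeps target_margin (a fraction of price) at this cost."""
    if not 0 <= target_margin < 1:
        raise ValueError("target_margin must be in [0, 1)")
    return round(unit_cost / (1 - target_margin), 2)


# Hypothetical unit costs before the tariff takes effect.
catalog = {"widget": 8.00, "gadget": 20.00}
tariff_rate = 0.10  # assumed 10% increase in landed cost

new_prices = {
    sku: reprice(cost * (1 + tariff_rate), target_margin=0.20)
    for sku, cost in catalog.items()
}
print(new_prices)
```

The logic is the same math a pricing analyst would do in a spreadsheet. What changes is that it can run across the whole catalog the moment the cost data changes.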

That’s the pattern: AI isn’t changing how business is done or how pricing has worked for centuries, it’s just enabling those decisions to happen faster than ever before.

-

🧠 We’re Still in the AOL Days of AI

AOL launched in 1983, Amazon didn’t show up until a decade later, and Google took nearly two decades. That’s the kind of timeline we’re on with AI: not just early, but early enough that we still haven’t figured out how to use it at work.

According to a new AP poll, 60% of U.S. adults have used AI to search for information, but only 37% have used it at work. The gap isn’t about capability, it’s about confusion. Companies are rolling out vague governance policies that say, “don’t use ChatGPT with company data,” but then fail to offer secure, internal tools connected to their systems. The result? No context, no value, and no adoption.

When my team at PerryLabs talks with companies, we see it again and again: well-meaning governance that blocks data access, without a real plan to replace it. That creates hallucinations, frustration, and a quiet surge in shadow IT as employees turn to whatever tools they can find. It’s like choosing not to give your team a performance boost, and acting surprised when you fall behind.

-

🧠 The hardest part of AI right now? Making the promise possible.

Walmart’s move toward super agents is one of the clearest examples of where this space is heading. Agents that don’t just answer questions, but take action. These aren’t JUST chatbots. They’re orchestrators: agents that talk to other agents, trigger workflows, and pull the right data at the right time to get real work done.

But you’ll notice something missing: details on how they’re actually doing it.

Everyone’s using the buzzwords (super agents, orchestration, real-time, action layers), but the tooling to make it all work takes work to build. It’s not a data lake, and it’s definitely not plug-and-play.

In The AI Evolution, I point to data lakes as a foundational layer, and they are. But they’re built for reporting, not action. What agentic AI needs is a layer that’s both readable and executable, with access to real-time context and permissions.
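
One way to picture a layer that is both readable and executable is a small registry of tools, each gated by a permission the agent must hold. This is a sketch under stated assumptions: `Tool`, `ActionLayer`, the permission strings, and the in-memory inventory are all hypothetical stand-ins, not a description of how Walmart or any vendor builds it.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set


@dataclass
class Tool:
    name: str
    handler: Callable[..., object]
    required_permission: str


class ActionLayer:
    """Hypothetical registry an agent calls instead of hitting systems directly."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, agent_permissions: Set[str], name: str, **kwargs):
        tool = self._tools[name]
        # Check permissions before any read or write reaches a real system.
        if tool.required_permission not in agent_permissions:
            raise PermissionError(
                f"agent lacks '{tool.required_permission}' for {name}")
        return tool.handler(**kwargs)


inventory = {"product-x": 3}  # stand-in for a live system of record

layer = ActionLayer()
layer.register(Tool("get_stock", lambda sku: inventory[sku], "inventory:read"))
layer.register(Tool("reorder", lambda sku, qty: f"ordered {qty} x {sku}",
                    "purchasing:write"))

read_only_agent = {"inventory:read"}
print(layer.call(read_only_agent, "get_stock", sku="product-x"))
```

A read-only agent can look up stock, but calling `reorder` raises `PermissionError` until it is granted `purchasing:write`. That separation between seeing and doing is what a reporting-oriented data lake does not provide.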

If you’re talking with companies that aren’t saying this, you’re building a huge data swamp that won’t unlock the things Walmart says they have. The reality is that most teams are duct-taping workflows together with brittle APIs or pushing dashboards behind a chat interface and calling it an agent.

That’s the space where I often find our work at PerryLabs: not just demoing agents, but building the underlying layers to actually deploy them. For lots of companies, the scaffolding just is not there yet.

-

Development Needs an AI-First Rewrite

I’ve spent the better part of the last two decades running or working on development teams, and as a developer I’m experimenting a lot with AI tools. I’m not alone; lots of people in the tech space are. So it comes as little surprise that these same teams are seeing the biggest impact from the ongoing shift to AI-native development.

A few weeks ago, I caught up with a dev manager friend, and without prompting, we jumped straight into a conversation about how fast everything is changing. For years, we’ve focused on building processes and procedures to help human teams collaborate and build complex software applications. But in the world of AI, the model is changing, and sometimes dramatically. What used to be a multi-person effort can now be a single human orchestrating multiple AI tools.

A perfect example: microservices.

The trend of breaking up complex applications into smaller pieces has been hot for a while now. It lets organizations build specialized teams around each part of the application and gives those teams more autonomy in how they operate. That made sense when your team was all human.

But in an AI-first world, it can actually make things harder.

A single repository might only represent a slice of the full application. And if you’re using an AI tool to review code, it can’t easily load up the full context of how everything connects. Sure, you can help the AI out with great documentation or give it access to multiple repos, but at the core, microservices are optimized for distributed human teams. They’re not necessarily optimized for AI tools that rely on full-graph context.

That’s why, in some cases, building a more complex monolithic application may actually be a better approach for AI-native teams building with (and for) AI tools.

Another place I’ve been experimenting is in seeding AI with persistent context, so I don’t have to be overly verbose every time I assign it a dev task. I caught myself over-explaining things in prompts after the AI would do something unexpected or take an approach I didn’t want. So I started adding AI-specific README files at the root of my projects. Not for humans—for AI.

These files include architecture decisions, key concepts, and even logs of recent changes or commits. When I’m using Claude inside VS Code, for example, I sometimes hit rate limits. If I need to switch to another model like Gemini or ChatGPT to pick up the work, I don’t want to start from scratch. Those AI README files give them the full context without having to re-prompt from zero.
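
As an illustration, a file like that can be assembled from a few lists and regenerated whenever the project changes. The sketch below is one possible layout; the function name `build_ai_readme`, the section titles, and the sample entries are assumptions, not a standard any particular tool expects.

```python
from typing import Iterable


def build_ai_readme(architecture: Iterable[str], concepts: Iterable[str],
                    recent_changes: Iterable[str]) -> str:
    """Assemble a context file for AI assistants from three lists of notes."""
    sections = [
        ("Architecture decisions", architecture),
        ("Key concepts", concepts),
        ("Recent changes", recent_changes),
    ]
    lines = ["# Context for AI assistants", ""]
    for title, items in sections:
        lines.append(f"## {title}")
        lines.extend(f"- {item}" for item in items)
        lines.append("")  # blank line between sections
    return "\n".join(lines)


# Sample entries are invented for the example.
readme = build_ai_readme(
    architecture=["Monolith on purpose: keep all modules in one repo"],
    concepts=["An 'order' is immutable once paid"],
    recent_changes=["Refactored checkout validation"],
)
print(readme)
```

Saving the output at the project root means any model that opens the repo can read the same context, whichever tool picks up the work.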

This is the kind of data that might be overwhelming for a human reviewer, but trivial for an AI model. And that’s part of the mindset shift. Developers are used to writing comments or notes that are for humans. But AI models benefit from verbose, detailed explanations. Concise isn’t always better.

AI also gives us an opportunity to finally shed some of the legacy technical debt that’s haunted development teams for decades. When Amazon first announced its AI assistant Q back in 2023, one of the headline features was its ability to upgrade old Java applications, moving from decades-old versions to the latest in minutes. Since then, I’ve seen multiple companies use AI to fully rewrite legacy systems into modern languages in weeks instead of years.

For some of the best programmers I know, this is a little distressing. They see code as craft, as poetry. And just like writers grappling with ChatGPT, the idea that AI can churn out working code with no elegance can feel like a loss. But beauty doesn’t always move the bottom line. Sure, I could pay top dollar for a handcrafted implementation. But the okay code produced by an AI tool that works, ships faster, and gets us to revenue or cost savings sooner? That’s usually the better trade.

And it’s not just the dev process, it’s entire products on the line. My friend put it bluntly: they used to rely on a range of SaaS tools because it wasn’t worth the effort to build them in-house. But now? A decent engineer with AI support can quickly build tools that used to require a paid subscription. That’s an existential threat for SaaS platforms that haven’t built a deep enough moat.

Take WordPress, for example. I know developers who used to rely on dozens of plugins, anything from SEO tweaks to form builders to table generators. Today, with AI support, they’re writing that functionality directly into themes or making their own plugins with AI. It’s faster, lighter, and more tailored to what they need. The value of external tools or those one-line NPM packages we used to reach for just to save time starts to diminish when you’ve got an army of AI bots doing the work for you. The calculus changes.

I always say in my talks that this is still the AOL phase of AI, it’s early. But what’s happening inside development teams is a preview of what’s coming for every other function in the business. We’ve spent decades refining human-first processes that work across teams, tools, and orgs. Now we’re going to have to rethink those processes, one by one, for an AI-first future.

-

🧠 AI is the Great Equalizer and the Ultimate Multiplier

OpenAI just released its first productivity report, based on real-world deployments of AI tools across consulting firms, government agencies, legal teams, and more. The results? Proof that AI isn’t just speeding up tasks, it’s fundamentally shifting how work gets done.

- Consulting: AI made consultants 25% faster, completing 12% more tasks with 40% higher quality. The biggest gains? Lower performers, up 43%.

- Legal services: Productivity jumped 34% to 140%, especially in complex work like persuasive writing and legal analysis.

- Government: Pennsylvania state workers saved 95 minutes per day, a full workday back every week, by using AI tools.

- Education: U.S. K–12 teachers using AI saved nearly 6 hours per week, the equivalent of six extra teaching weeks a year.

- Customer service: Call center agents became 14% more productive, with junior staff seeing the biggest gains.

- Marketing: Content creators using AI saved 11+ hours per week on copy, ideas, and assets.
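
The "full workday back every week" figure is easy to sanity-check. A quick sketch, assuming a five-day week and an eight-hour day (assumptions added here, not numbers from the report):

```python
MINUTES_SAVED_PER_DAY = 95  # from the government figure above
WORKDAYS_PER_WEEK = 5       # assumption
HOURS_PER_WORKDAY = 8       # assumption

hours_saved_per_week = MINUTES_SAVED_PER_DAY * WORKDAYS_PER_WEEK / 60
workdays_back = hours_saved_per_week / HOURS_PER_WORKDAY

print(f"{hours_saved_per_week:.1f} hours/week, about {workdays_back:.2f} workdays")
```

Under those assumptions the savings come to just under eight hours a week, which lines up with the one-workday claim.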

These aren’t just stats. They’re signals that AI doesn’t just help people work faster. We’re entering a moment where tools do more than help, they amplify.

AI can take a D player and make them a B. What does it do for your A and B players? It turns them into 10x or 100x powerhouses. Not just faster, but more scalable and more consistent.

In my book The AI Evolution, I talk about this movement as a shift to Vibe Teams. Small, AI-augmented groups that pair human strategy with agentic execution. They’re cross-functional. They move fast. They scale without headcount. And they don’t just adopt AI, they build with it.

-

🧠 The Fraud Risk No One’s Ready For

I agree with Sam Altman: AI-powered deepfakes and voice cloning are among the most alarming risks we’re facing right now.

Here in Baltimore, we’ve already seen how real this can get. Last year, a Pikesville high school principal was accused of making racist comments—until it came out that the entire recording was a voice clone. After that, I experimented with cloning my own voice and doctoring a video of myself. It wasn’t perfect, but it was close enough to be unsettling.

Systems that rely on voice to ID customers just aren’t safe anymore. Tools like ElevenLabs have made cloning voices frighteningly easy, and if you’ve ever said a few words online or been recorded at an event, chances are you’ve given someone enough data to copy you.

Scammers are already using these tools in the wild, and combined with the mountain of personal info already floating around online, it’s easy to imagine how these attacks are getting harder to detect. Security training used to teach us that phishing emails were easy to spot. Look for misspellings, weird tone, bad grammar. But now? The grammar’s perfect. The details are specific. And the sender sounds like your boss, your partner, or your kid.

So what can you do? Start with the basics. And remember, it’s not just about locking down your own account. Train your team. Talk to your family. Assume your voice and your data are already out there, and focus on making it harder for attackers to weaponize them.

Use multi-factor authentication. Slow down before responding to anything unusual. And always, always, verify through a second channel if something feels off. No one’s going to get upset if you hang up and call them back. Or shoot them a text to double-check. That extra step might be the thing that saves you from all this mess.

-

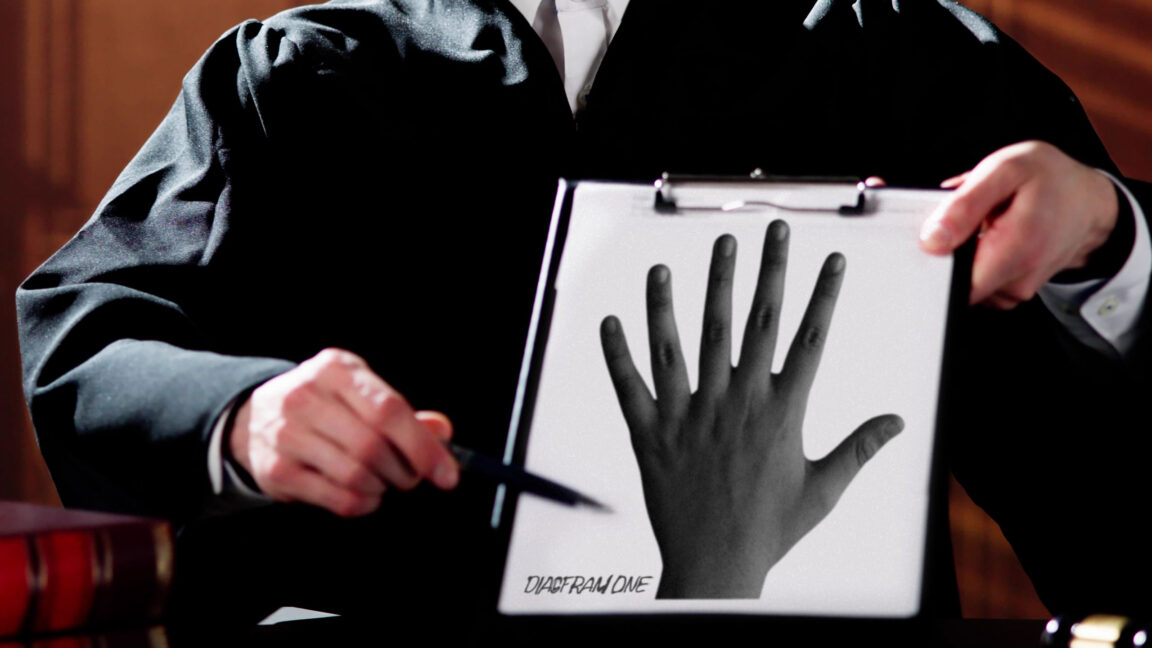

🧠 Not All Citations Can Be Trusted

If you’re not familiar with the term “hallucination,” it’s what happens when an AI confidently gives you an answer… that’s completely made up. It might sound convincing. It might even cite a source. But sometimes, a quick Google search is all it takes to realize that study, case, or quote never existed.

That’s the scary part. And it’s not going away.

In fact, hallucinations are getting worse, not better. And the bigger issue is that AI is now quietly woven into the background of nearly everything we read: articles, presentations, reports, sometimes even court filings.

That’s why I think we’ll start seeing a shift where citations aren’t just taken at face value anymore. Judges, editors, and program officers need to start asking: “Did you actually check this? Is this citation real?”

The good news? There’s a growing wave of tools designed to help with exactly that:

- Sourcely scans your writing and suggests credible, traceable sources, or flags weak ones that don’t hold up.

- Scite Assistant reviews your citations and shows whether later research supports or contradicts the claim.

- Perplexity isn’t perfect, but I often use it as a quick smell test when an AI-generated response feels a little too slick.

- LegalEase, Westlaw, and other legal tools are already helping firms and courts catch citation errors before they make it into the record.

Just because a model says it, and even if it drops a polished citation, doesn’t mean it’s true. We used to say, “Just take a look, it’s in a book.” Now? You better take a second look, and make sure that book actually exists.

If your work is making claims – especially ones tied to legal precedent, public health, or science – then checking those claims has to become part of the workflow.

Truth still matters. And in the age of generative content, fact-checking is the new spellcheck.

-

The Problem with Data

Everyone has the same problem, and its name is data. Nearly every business functions in one of two core data models:

- ERP-centric: One large enterprise system (like SAP, NetSuite, or Microsoft Dynamics) acts as the hub for inventory, customers, finance, and operations. It’s monolithic, but everything is in one place.

- Best-of-breed: A constellation of specialized tools – Salesforce or HubSpot for CRM, Zendesk for support, Shopify or WooCommerce for commerce, QuickBooks for finance – all loosely stitched together, if at all.

In reality, most businesses operate somewhere in between. One system becomes the “system of truth,” while others orbit it, each with its own partial view of the business. That setup is manageable until AI enters the picture.

AI is data-hungry. It works best when it can see across your operations. But ERP vendors often make interoperability difficult by design. Their strategy has been to lock you in and make exporting or connecting data expensive or complex.

That’s why more organizations are turning to data lakes or lakehouses, central repositories that aggregate information from across systems and make it queryable. Platforms like Snowflake and Databricks have grown quickly by helping enterprises unify fragmented data into one searchable hub.

When done well, a data lake gives your AI tools visibility across departments: product, inventory, sales, finance, customer support. It’s the foundation for better analytics and better decisions.

But building a good data lake isn’t easy. As I joke in my book The AI Evolution, a bad data lake is just a data swamp: a messy, unstructured dump that’s more confusing than helpful. Without a clear data model and strategy for linking information, you’re just hoarding bytes.

Worse, the concept of data lakes was designed pre-AI. They’re great at storing and querying data, but not great at acting on it. If your AI figures out that you’re low on Product X from Supplier Y, your data lake can’t place the order; it can only tell you.

This is where a new approach is gaining traction: API orchestration. Instead of just storing data, you build connective tissue between systems using APIs, letting AI both see and do across tools. Think of it like a universal translator (or Babelfish): systems speak different languages, but orchestration helps them understand each other.

For example, say HubSpot has your customer data and Shopify has your purchase history. By linking them via API, you can match users by email and give AI a unified view. Better yet, if those APIs allow actions, the AI can update records or trigger workflows directly.
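
The matching step can be sketched in a few lines. The record shapes and field names below (`email`, `name`, `order_id`) are simplified placeholders, not the real HubSpot or Shopify API schemas, and the data is hard-coded where a real integration would fetch it from each platform.

```python
def unify_by_email(crm_contacts, orders):
    """Merge CRM contacts with order history, keyed on normalized email."""
    unified = {}
    for contact in crm_contacts:
        key = contact["email"].strip().lower()
        unified[key] = {"name": contact["name"], "orders": []}
    for order in orders:
        key = order["email"].strip().lower()
        if key in unified:  # skip orders with no matching CRM contact
            unified[key]["orders"].append(order["order_id"])
    return unified


# Stand-in records; real data would come from each platform's API.
hubspot_contacts = [{"email": "Ada@Example.com", "name": "Ada"}]
shopify_orders = [
    {"email": "ada@example.com ", "order_id": "1001"},
    {"email": "bob@example.com", "order_id": "1002"},
]

customers = unify_by_email(hubspot_contacts, shopify_orders)
print(customers)
```

Normalizing case and whitespace before matching matters more than it looks, since the same customer often appears with slightly different email formatting in each system.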

Big players like MuleSoft are building enterprise-grade orchestration platforms. But for smaller orgs, tools like Zapier and n8n are becoming popular ways to connect their best-of-breed stacks and make data more actionable.

The bottom line: if your data lives in disconnected systems, you’re not alone. This is the reality for nearly every business we work with. But investing in data cleanup and orchestration now isn’t just prep, it’s the first step needed to truly unlock the power of AI.

That’s exactly why we built the AI Accelerator at PerryLabs. It’s designed for companies stuck in this in-between state where the data is fragmented, the systems don’t talk, and the AI potential feels just out of reach. Through the Accelerator, we help you identify those key data gaps, unify and activate your systems, and build the orchestration layer that sets the stage for real AI performance. Because the future of AI isn’t just about having the data—it’s about making it usable.